Mathematical Extensions

imported>Pascal |

imported>Pascal |

||

| Line 133: | Line 133: | ||

==== AST requirements ==== | ==== AST requirements ==== | ||

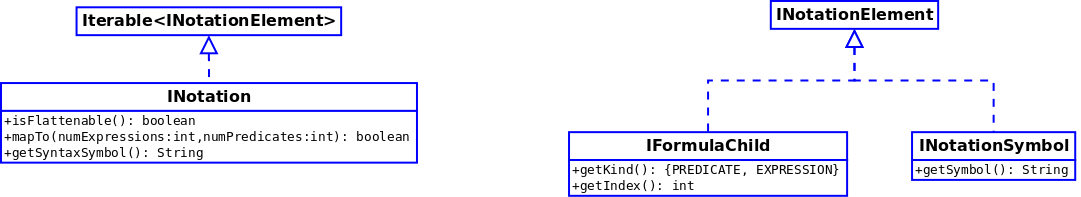

The following hard-coded concepts need to be reified for supporting extensions in the AST. An extension instance will then provide these reified informations to an extended formula class for it to be able to fulfil its API. It is the expression of the missing information for a | The following hard-coded concepts need to be reified for supporting extensions in the AST. An extension instance will then provide these reified informations to an extended formula class for it to be able to fulfil its API. It is the expression of the missing information for a <tt>ExtendedExpression</tt> (resp. <tt>ExtendedPredicate</tt>) to behave as a <tt>Expression</tt> (resp. <tt>Predicate</tt>). It can also be viewed as the parametrization of the AST. | ||

===== Notation ===== | ===== Notation ===== | ||

| Line 149: | Line 149: | ||

On the "<math>\lozenge</math>" infix operator example, the iterable notation would return sucessively: | On the "<math>\lozenge</math>" infix operator example, the iterable notation would return sucessively: | ||

* a | * a <tt>IFormulaChild</tt> with index 1 | ||

* the | * the <tt>INotationSymbol</tt> "<math>\lozenge</math>" | ||

* a | * a <tt>IFormulaChild</tt> with index 2 | ||

* the | * the <tt>INotationSymbol</tt> "<math>\lozenge</math>" | ||

* … | * … | ||

* a | * a <tt>IFormulaChild</tt> with index <math>n</math> | ||

For the iteration not to run forever, the limit <math>n</math> needs to be known: this is the role of the | For the iteration not to run forever, the limit <math>n</math> needs to be known: this is the role of the <tt>mapsTo()</tt> method, which fixes the number of children, called when this number is known (i.e. for a particular formula instance). | ||

'''Open question''': how to integrate bound identifier lists in the notation ? | '''Open question''': how to integrate bound identifier lists in the notation? | ||

We may make a distinction between fixed-size notations (like n-ary operators for a given n) and variable-size notations (like associative infix operators). | We may make a distinction between fixed-size notations (like n-ary operators for a given n) and variable-size notations (like associative infix operators). | ||

| Line 178: | Line 178: | ||

* expression variables (predicate variables already exist) | * expression variables (predicate variables already exist) | ||

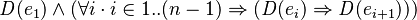

* special expression variables and predicate variables that denote a particular formula child (we need to refer to <math>e_1</math> and <math>e_i</math> in the above example) | * special expression variables and predicate variables that denote a particular formula child (we need to refer to <math>e_1</math> and <math>e_i</math> in the above example) | ||

* a | * a <tt>parse()</tt> method that accepts these special meta variables and the <math>\mathit{D}</math> operator and returns a <tt>Predicate</tt> (a WD Predicate Pattern) | ||

* a | * a <tt>makeWDPredicate(aWDPredicatePattern, aPatternInstantiation)</tt> method that makes an actual WD predicate | ||

===== Type Check ===== | ===== Type Check ===== | ||

| Line 194: | Line 194: | ||

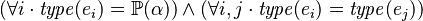

* type variables (<math>\alpha</math>) | * type variables (<math>\alpha</math>) | ||

* the above-mentioned expression variables and predicate variables | * the above-mentioned expression variables and predicate variables | ||

* a | * a <tt>parse()</tt> method that accepts these special meta variables and the <math>\mathit{type}</math> operator and returns a <tt>Predicate</tt> (a Type Predicate Pattern) | ||

* a | * a <tt>makeTypePredicate(aTypePredicatePattern, aPatternInstantiation)</tt> method that makes an actual Type predicate | ||

===== Type Solve ===== | ===== Type Solve ===== | ||

| Line 205: | Line 205: | ||

In addition to the requirements for Type Check, the following features are needed: | In addition to the requirements for Type Check, the following features are needed: | ||

* a | * a <tt>parse()</tt> method that accepts special meta variables and the <math>\mathit{type}</math> operator and returns an <tt>Expression</tt> (a Type Expression Pattern) | ||

* a | * a <tt>makeTypeExpression(aTypeExpressionPattern, aPatternInstantiation)</tt> method that makes an actual Type expression | ||

==== Static Checker requirements ==== | ==== Static Checker requirements ==== | ||

Revision as of 16:15, 25 March 2010

Currently the operators and basic predicates of the Event-B mathematical language supported by Rodin are fixed. We propose to extend Rodin so that users can define basic predicates, new operators or new algebraic types.

Requirements

User Requirements

- Binary operators (prefix form, infix form or suffix form).

- Operators on boolean expressions.

- Unary operators, such as absolute values.

- Note that the pipe, which is already used for set comprehension, cannot be used to enter absolute values. (In fact, since in the new design the pipe used in set comprehension is only syntactic sugar, the same symbol may be usable for absolute value. This is to be confirmed with the prototyped parser. It may however be disallowed at first, until backtracking is implemented, because the lookahead makes assumptions about how the pipe symbol is used. -Mathieu)

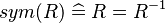

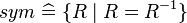

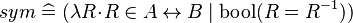

- Basic predicates (e.g., the symmetry of relations).

- Having a way to enter such predicates may be considered as syntactic sugar, because it is already possible to express them using sets or functions.

- Quantified expressions.

- Types.

- Enumerated types.

- Scalar types.

User Input

The end-user shall provide the following information:

- keyboard input

- Lexicon and Syntax.

More precisely, it includes the symbols, the form (prefix, infix, postfix), the grammar, associativity (left-associative or right-associative), commutativity, priority, the mode (flattened or not), ...

- Pretty-print.

Alternatively, the rendering may be determined from the notation parameters passed to the parser.

- Typing rules.

- Well-definedness.

Development Requirements

- Scalability.

Towards a generic AST

The following AST parts are to become generic, or at least parameterised:

- Lexer

- Parser

- Nodes (Formula class hierarchy): parameters needed for:

- Type Solve (type rule needed to synthesize the type)

- Type Check (type rule needed to verify constraints on children types)

- WD (WD predicate)

- PrettyPrint (tag image + notation (prefix, infix, postfix) + needs parentheses)

- Visit Formula (getting children + visitor callback mechanism)

- Rewrite Formula (associative formulæ have a specific flattening treatment)

- Types (Type class hierarchy): parameters needed for:

- Building the type expression (type rule needed)

- PrettyPrint (set operator image)

- getting Base / Source / Target type (type rule needed)

- Verification of preconditions (see for example AssociativeExpression.checkPreconditions)

Vocabulary

An extension is to be understood as a single additional operator definition.

Tags

Every extension is associated with an integer tag, just like existing operators. Thus, questions arise about how to allocate new tags and how to deal with existing tags.

The solution proposed here consists in keeping existing tags 'as is'. They are already defined and hard coded in the Formula class, so this choice is made with backward compatibility in mind.

Now concerning extension tags, we will first introduce a few hypotheses:

- Tags_Hyp1: tags are never persisted across sessions on a given platform

- Tags_Hyp2: tags are never referenced for sharing purposes across various platforms

In other words, cross-platform/session formula references are always made through their String representation. These assumptions, which were already made and verified for Rodin before extensions, lead us to restrict further considerations to the scope of a single session on a single platform.

The following definitions hold at a given instant and for the whole platform. They name three objects:

- the set of extensions supported by the platform at that instant;

- the assignment of tags to extensions at that instant;

- the set of existing tags defined by the Formula class.

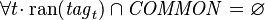

The following requirements emerge:

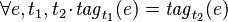

- Tags_Req1:

- Tags_Req2: (relating two instants during a given session)

- Tags_Req3:

The above-mentioned scope-restricting hypothesis can be reformulated into: the tag assignment need not be stable across sessions nor across platforms.

Formula Factory

The requirements about tags give rise to a need for centralising the tag assignment relation in order to enforce tag uniqueness.

The Formula Factory appears to be a convenient and logical candidate for playing this role. Each time an extension is used to make a formula, the factory is called and it can check whether its associated tag exists, create it if needed, then return the new extended formula while maintaining tag consistency.

The factory can also provide an API for requests about tags and extensions: getting the tag from an extension and conversely.
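The bookkeeping described above could be sketched as follows. This is only an illustration under stated assumptions: Extension, ExtensionTagRegistry and firstFreeTag are hypothetical names, and allocating extension tags sequentially above the existing ones is an assumption, not a decision recorded on this page.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical marker for an extension; the real interface is not specified here. */
interface Extension {
    String getId();
}

/** Sketch of a registry that allocates one tag per extension within a session. */
class ExtensionTagRegistry {
    // Assumption: extension tags start above every tag hard-coded in Formula.
    private final int firstFreeTag;
    private final Map<Extension, Integer> tagOf = new HashMap<>();
    private final Map<Integer, Extension> extensionOf = new HashMap<>();

    ExtensionTagRegistry(int firstFreeTag) {
        this.firstFreeTag = firstFreeTag;
    }

    /** Returns the tag of the extension, allocating a fresh one on first use. */
    synchronized int getTag(Extension extension) {
        Integer tag = tagOf.get(extension);
        if (tag == null) {
            tag = firstFreeTag + tagOf.size();
            tagOf.put(extension, tag);
            extensionOf.put(tag, extension);
        }
        return tag;
    }

    /** Returns the extension bound to the tag, or null if the tag is unknown. */
    synchronized Extension getExtension(int tag) {
        return extensionOf.get(tag);
    }
}
```

Because the registry lives only in memory, the tags it hands out are meaningful for a single session on a single platform, which matches hypotheses Tags_Hyp1 and Tags_Hyp2.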

We also need additional methods to create extended formulæ. A first problem to address is: which type should these methods return? We could define as many extended types as the common AST API does, namely ExtendedUnaryPredicate, ExtendedAssociativeExpression, and so on, but this would lead to a large number of new types to deal with (in visitors, filters, …), together with a constraint on which types extensions would be forced to fit into. It is thus preferable to have as few extended types as possible, each as parameterised as possible. Considering that the two basic types Expression and Predicate have to be extensible, we add two extended types: ExtendedExpression and ExtendedPredicate.

ExtendedExpression makeExtendedExpression( ? )

ExtendedPredicate makeExtendedPredicate( ? )

Second problem to address: which arguments should these methods take? Other factory methods take the tag, a collection of children where applicable, and a source location. In order to discuss what can be passed as argument to make extended formulæ, we have to recall that the make… factory methods have various kinds of clients, namely:

- parser

- POG

- provers

(other factory clients use the parsing or identifier utility methods: SC modules, indexers, …)

Thus, the arguments should be convenient for clients, depending on which information they have at hand. The source location does not seem to require any adaptation and can be taken as an argument in the same way. Concerning the tag, it depends on whether clients have a tag or an extension at hand. Both are intended to be easily retrieved from the factory. As a preliminary choice, we can go for the tag and adjust this decision when we know more about client convenience.

As for children, the problem is more about their types. We want to be able to handle as many children as needed, and of all possible types. Looking at existing formula children configurations, we can find:

- expressions with predicate children

- expressions with expression children

- predicates with predicate children

- predicates with expression children

- mixed operators (but it is worth noting that the possibility of introducing bound variables in extended formulæ is not established yet)

Thus, for the sake of generality, children of both types should be supported for both extended predicates and extended expressions.

ExtendedExpression makeExtendedExpression(int tag, Expression[] expressions, Predicate[] predicates, SourceLocation location)

ExtendedPredicate makeExtendedPredicate(int tag, Expression[] expressions, Predicate[] predicates, SourceLocation location)
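As an illustration of the signature above, here is a minimal sketch of an extended expression that carries children of both kinds. The Expression, Predicate and SourceLocation classes below are hypothetical stand-ins reduced to what the sketch needs, not the real AST types, and SketchFormulaFactory is an invented name; ExtendedPredicate would mirror the same shape.

```java
/** Stand-in for the AST Expression type (hypothetical, reduced for the sketch). */
class Expression { }

/** Stand-in for the AST Predicate type (hypothetical, reduced for the sketch). */
class Predicate { }

/** Stand-in for a source location: a character range in the parsed string. */
class SourceLocation {
    final int start;
    final int end;

    SourceLocation(int start, int end) {
        this.start = start;
        this.end = end;
    }
}

/** Extended expression carrying children of both kinds. */
class ExtendedExpression extends Expression {
    private final int tag;
    private final Expression[] childExpressions;
    private final Predicate[] childPredicates;
    private final SourceLocation location;

    ExtendedExpression(int tag, Expression[] expressions,
            Predicate[] predicates, SourceLocation location) {
        this.tag = tag;
        // Defensive copies keep the node unaffected by later changes to the arrays.
        this.childExpressions = expressions.clone();
        this.childPredicates = predicates.clone();
        this.location = location;
    }

    int getTag() { return tag; }
    Expression[] getChildExpressions() { return childExpressions.clone(); }
    Predicate[] getChildPredicates() { return childPredicates.clone(); }
    SourceLocation getSourceLocation() { return location; }
}

/** Factory exposing the method discussed above. */
class SketchFormulaFactory {
    ExtendedExpression makeExtendedExpression(int tag, Expression[] expressions,
            Predicate[] predicates, SourceLocation location) {
        return new ExtendedExpression(tag, expressions, predicates, location);
    }
}
```

Passing two arrays rather than one mixed collection keeps the children statically typed, at the cost of losing their relative order; whether that order matters is left to the notation.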

Defining Extensions

An extension is meant to contain all the information and behaviour required by:

- Keyboard

- (Extensible Fonts ?)

- Lexer

- Parser

- AST

- Static Checker

- Proof Obligation Generator

- Provers

Keyboard requirements

Kbd_req1: an extension must provide an association combo/translation for every uncommon symbol involved in the notation.

Lexer requirements

Lex_req1: an extension must provide an association lexeme/token for every uncommon symbol involved in the notation.

Parser requirements

According to the Parser page, the following information is required by the parser in order to be able to parse a formula containing a given extension.

- symbol compatibility

- group compatibility

- symbol precedence

- group precedence

- notation (see below)

AST requirements

The following hard-coded concepts need to be reified for supporting extensions in the AST. An extension instance will then provide this reified information to an extended formula class for it to be able to fulfil its API. It is the expression of the missing information for an ExtendedExpression (resp. ExtendedPredicate) to behave as an Expression (resp. Predicate). It can also be viewed as the parametrization of the AST.

Notation

The notation defines how the formula will be printed. In this document, we use a convention with one symbol for the i-th child expression of the extended formula and another for its i-th child predicate.

Example: infix operator "<math>\lozenge</math>"

We define the following notation framework:

On the "<math>\lozenge</math>" infix operator example, the iterable notation would return successively:

- an IFormulaChild with index 1

- the INotationSymbol "<math>\lozenge</math>"

- an IFormulaChild with index 2

- the INotationSymbol "<math>\lozenge</math>"

- …

- an IFormulaChild with index <math>n</math>

For the iteration not to run forever, the limit <math>n</math> needs to be known: this is the role of the mapsTo() method, which fixes the number of children, called when this number is known (i.e. for a particular formula instance).
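The iteration just described can be sketched as follows. Only the names IFormulaChild, INotationSymbol and mapsTo() come from this page; their members, the common supertype INotationElement, the InfixNotation class and the choice of returning an Iterable from mapsTo() are assumptions made for the sake of a compact example.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical common supertype of everything a notation can yield. */
interface INotationElement { }

/** A symbol of the notation; getSymbol() is an assumed accessor. */
interface INotationSymbol extends INotationElement {
    String getSymbol();
}

/** A placeholder for the i-th child; getIndex() is an assumed accessor. */
interface IFormulaChild extends INotationElement {
    int getIndex();
}

/** Variable-size infix notation: child, symbol, child, symbol, ..., child. */
class InfixNotation {
    private final String symbol;

    InfixNotation(String symbol) {
        this.symbol = symbol;
    }

    /** Fixes the number of children, which makes the sequence finite. */
    Iterable<INotationElement> mapsTo(int numberOfChildren) {
        List<INotationElement> elements = new ArrayList<>();
        for (int i = 1; i <= numberOfChildren; i++) {
            final int index = i;
            if (i > 1) {
                // The symbol only appears between two consecutive children.
                elements.add((INotationSymbol) () -> symbol);
            }
            elements.add((IFormulaChild) () -> index);
        }
        return elements;
    }
}
```

A pretty-printer could walk the returned elements in order, printing each symbol as is and replacing each IFormulaChild by the printed form of the corresponding child.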

Open question: how to integrate bound identifier lists in the notation?

We may make a distinction between fixed-size notations (like n-ary operators for a given n) and variable-size notations (like associative infix operators). While fixed-size notations would have no specific expressivity limitations (besides parser conflicts with other notations), only a finite set of pre-defined variable-size notation patterns will be proposed to the user. For technical reasons related to the parser,

The following features need to be implemented to support the notation framework:

- special meta variables that represent children

- a notation parser that extracts a fixed-size notation pattern from a user-input String

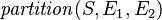

Well-Definedness

WD predicates are also user-provided data.

Example:

In order to process WD predicates, we need to add the following features to the AST:

- the <math>\mathit{D}</math> operator

- expression variables (predicate variables already exist)

- special expression variables and predicate variables that denote a particular formula child (we need to refer to <math>e_1</math> and <math>e_i</math> in the above example)

- a parse() method that accepts these special meta variables and the <math>\mathit{D}</math> operator and returns a Predicate (a WD Predicate Pattern)

- a makeWDPredicate(aWDPredicatePattern, aPatternInstantiation) method that makes an actual WD predicate

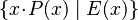

Type Check

An extension shall give a type rule, which consists of:

- type check predicates (addressed in this very section)

- a resulting type expression (only for expressions)

Example:

Type checking can be reified provided the following new AST features are available:

- the <math>\mathit{type}</math> operator

- type variables (<math>\alpha</math>)

- the above-mentioned expression variables and predicate variables

- a parse() method that accepts these special meta variables and the <math>\mathit{type}</math> operator and returns a Predicate (a Type Predicate Pattern)

- a makeTypePredicate(aTypePredicatePattern, aPatternInstantiation) method that makes an actual Type predicate

Type Solve

This section addresses type synthesis for extended expressions (the resulting type part of a type rule). It is given as a type expression pattern, so that the actual type can be computed from the children.

Example:

In addition to the requirements for Type Check, the following features are needed:

- a parse() method that accepts special meta variables and the <math>\mathit{type}</math> operator and returns an Expression (a Type Expression Pattern)

- a makeTypeExpression(aTypeExpressionPattern, aPatternInstantiation) method that makes an actual Type expression
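The makeWDPredicate, makeTypePredicate and makeTypeExpression methods all take a pattern and a pattern instantiation, so they presumably share one mechanism: replacing the meta variables that denote children by the actual children of a formula instance. The sketch below shows that substitution on plain strings only. Real patterns would be Predicate or Expression trees, and the class name, the `e_<index>` spelling of meta variables and the sample pattern are all assumptions.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** String-based illustration of instantiating a pattern with actual children. */
class PatternInstantiationSketch {
    // Assumed spelling of child meta variables: e_1, e_2, ...
    private static final Pattern META_VARIABLE = Pattern.compile("\\be_\\d+\\b");

    /**
     * Replaces every meta variable of the pattern by its parenthesised child.
     * The instantiation maps a meta variable name to the image of a child.
     */
    static String instantiate(String pattern, Map<String, String> instantiation) {
        Matcher matcher = META_VARIABLE.matcher(pattern);
        StringBuffer result = new StringBuffer();
        while (matcher.find()) {
            String child = instantiation.get(matcher.group());
            if (child == null) {
                throw new IllegalArgumentException("No child for " + matcher.group());
            }
            // quoteReplacement prevents '$' or '\' in a child from being interpreted.
            matcher.appendReplacement(result, Matcher.quoteReplacement("(" + child + ")"));
        }
        matcher.appendTail(result);
        return result.toString();
    }
}
```

For instance, with the invented pattern `D(e_1) & e_2 /= 0` and the children `a+b` and `c`, the method yields `D((a+b)) & (c) /= 0`. An AST-based version would avoid the extra parentheses by substituting nodes instead of text.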

Static Checker requirements

TODO

Proof Obligation Generator requirements

TODO

Provers requirements

TODO

Extension compatibility issues

TODO

User Input Summarization

Identified required data entail the following user input:

TODO

Impact on other tools

Impacted plug-ins (use a factory to build formulæ):

- org.eventb.core

- In particular, the static checker and proof obligation generator are impacted.

- org.eventb.core.seqprover

- org.eventb.pp

- org.eventb.pptrans

- org.eventb.ui

Identified Problems

The parser shall enforce verifications to detect the following situations:

- Two mathematical extensions are not compatible (the extensions define symbols with the same name but with a different semantics).

- A mathematical extension is added to a model and there is a conflict between a symbol and an identifier.

- An identifier which conflicts with a symbol of a visible mathematical extension is added to a model.
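A minimal sketch of the name-based part of these verifications is given below. It only compares names: deciding whether two extensions that share a symbol really have different semantics is outside its scope. The class name and the representation of an extension as a set of symbol strings are assumptions.

```java
import java.util.HashSet;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

/** Name-based conflict detection between extensions and model identifiers. */
class SymbolConflictChecker {

    /** Returns the identifiers that clash with a symbol of some visible extension. */
    static Set<String> conflictingIdentifiers(Set<String> identifiers,
            Map<String, Set<String>> symbolsByExtension) {
        Set<String> conflicts = new TreeSet<>();
        for (Set<String> symbols : symbolsByExtension.values()) {
            for (String symbol : symbols) {
                if (identifiers.contains(symbol)) {
                    conflicts.add(symbol);
                }
            }
        }
        return conflicts;
    }

    /** Returns the symbols defined by more than one extension. */
    static Set<String> sharedSymbols(Map<String, Set<String>> symbolsByExtension) {
        Set<String> seen = new HashSet<>();
        Set<String> shared = new TreeSet<>();
        for (Set<String> symbols : symbolsByExtension.values()) {
            for (String symbol : symbols) {
                // add() returns false when another extension already defined the symbol.
                if (!seen.add(symbol)) {
                    shared.add(symbol);
                }
            }
        }
        return shared;
    }
}
```

The same two checks would run both when an extension is added to a model and when an identifier is added, since either event can introduce a clash.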

Beyond that, the following situations are problematic:

- A formula has been written with a given parser configuration and is read with another parser configuration.

- As a consequence, it appears necessary to remember the parser configuration.

- The static checker will then have a way to invalidate the sources of conflicts (e.g., priority of operators, etc).

- The static checker will then have a way to invalidate the formula upon detecting a conflict (name clash, associativity change, semantic change...). -Mathieu

- A proof may free a quantified expression which is in conflict with a mathematical extension.

- SOLUTION #1: Renaming the conflicting identifiers in proofs?

Open Questions

New types

Which option should we prefer for new types?

- OPTION #1: Transparent mode.

- In transparent mode, the base type is always referred to. As a consequence, type conversion is implicitly supported (weak typing).

- For example, it is possible to define the DISTANCE and SPEED types, which are both derived from the same base type, and to multiply a value of the former type with a value of the latter type.

- OPTION #2: Opaque mode.

- In opaque mode, the base type is never referred to. As a consequence, values of one type cannot be converted to another type (strong typing).

- Thus, the above multiplication is not allowed.

- This approach has at least two advantages:

- Stronger type checking.

- Better prover performance.

- It also has some disadvantages:

- need for extractors to convert back to base types.

- need for extra circuitry to allow certain expressions whose operands are of type DISTANCE

- OPTION #3: Mixed mode.

- In mixed mode, the transparent mode is applied to scalar types and the opaque mode is applied to other types.

Scope of the mathematical extensions

- OPTION #1: Project scope.

- The mathematical extensions are implicitly visible to all components of the project that has imported them.

- OPTION #2: Component scope.

- The mathematical extensions are only visible to the components that have explicitly imported them. However, note that this visibility is propagated through the hierarchy of contexts and machines (EXTENDS, SEES and REFINES clauses).

- An issue has been identified. Suppose that extension ext1 is visible to component C1 and extension ext2 is visible to component C2, and there is no compatibility issue between ext1 and ext2. It is not excluded that an identifier declared in C1 conflicts with a symbol in ext2. As a consequence, a global verification is required when adding a new mathematical extension.

Bibliography

- J.R. Abrial, M. Butler, M. Schmalz, S. Hallerstede, L. Voisin, Proposals for Mathematical Extensions for Event-B, 2009.

- This proposal consists in considering three kinds of extension:

- Extensions of set-theoretic expressions or predicates: example extensions of this kind consist in adding the transitive closure of relations or various ordered relations.

- Extensions of the library of theorems for predicates and operators.

- Extensions of the Set Theory itself through the definition of algebraic types such as lists or ordered trees using new set constructors.